Toward Grounded Commonsense Reasoning

We equip robots with commonsense reasoning skills by enabling them to actively gather missing information from the environment.

Consider a robot tasked with tidying a desk with a meticulously constructed Lego sports car. A human may recognize that it is not appropriate to disassemble the sports car and put it away as part of the "tidying". How can a robot reach that conclusion without human supervision? Although large language models (LLMs) have recently been used to enable commonsense reasoning, grounding this reasoning in the real world has been challenging. To reason in the real world, our key insight is that robots must go beyond passively querying LLMs and actively gather information from the environment that is required to make the right decision. We propose an approach that leverages an LLM and vision language model (VLM) to help a robot actively perceive its environment to perform grounded commonsense reasoning. To evaluate our framework at scale, we release the MessySurfaces dataset which contains images of 70 real-world surfaces that need to be cleaned.

Key Insight

Tapping into an LLM's commonsense reasoning skills in the real-world requires the ability to ground language -- an ability that might be afforded by vision-and-language models (VLMs). However, a fundamental limitation is the image itself might not contain all the relevant information if the object is partially occluded or if the image is too zoomed out. Here are some examples:

To address limitations of VLMs, robots will need to go beyond passively querying LLMs and VLMs to obtain action plans. Our insight is that robots must reason about what additional information they need to make appropriate decisions, and then actively perceive the environment to gather that information (e.g., take a close-up photo of the paper, take a top-down view of the bag, or look behind the box to see the camera).

Approach

We propose a framework to enable a robot to perform grounded commonsense reasoning by iteratively identifying details it still needs to clarify about the scene before it can make a decision and actively gathering new observations to help answer those questions.

MessySurfaces Dataset

- Contains images of 308 objects across 70 real-world surfaces that need to be cleaned.

- Each object has a scene-level image, 5 close-up images, and a benchmark question and answer on the most appropriate way to clean the object up.

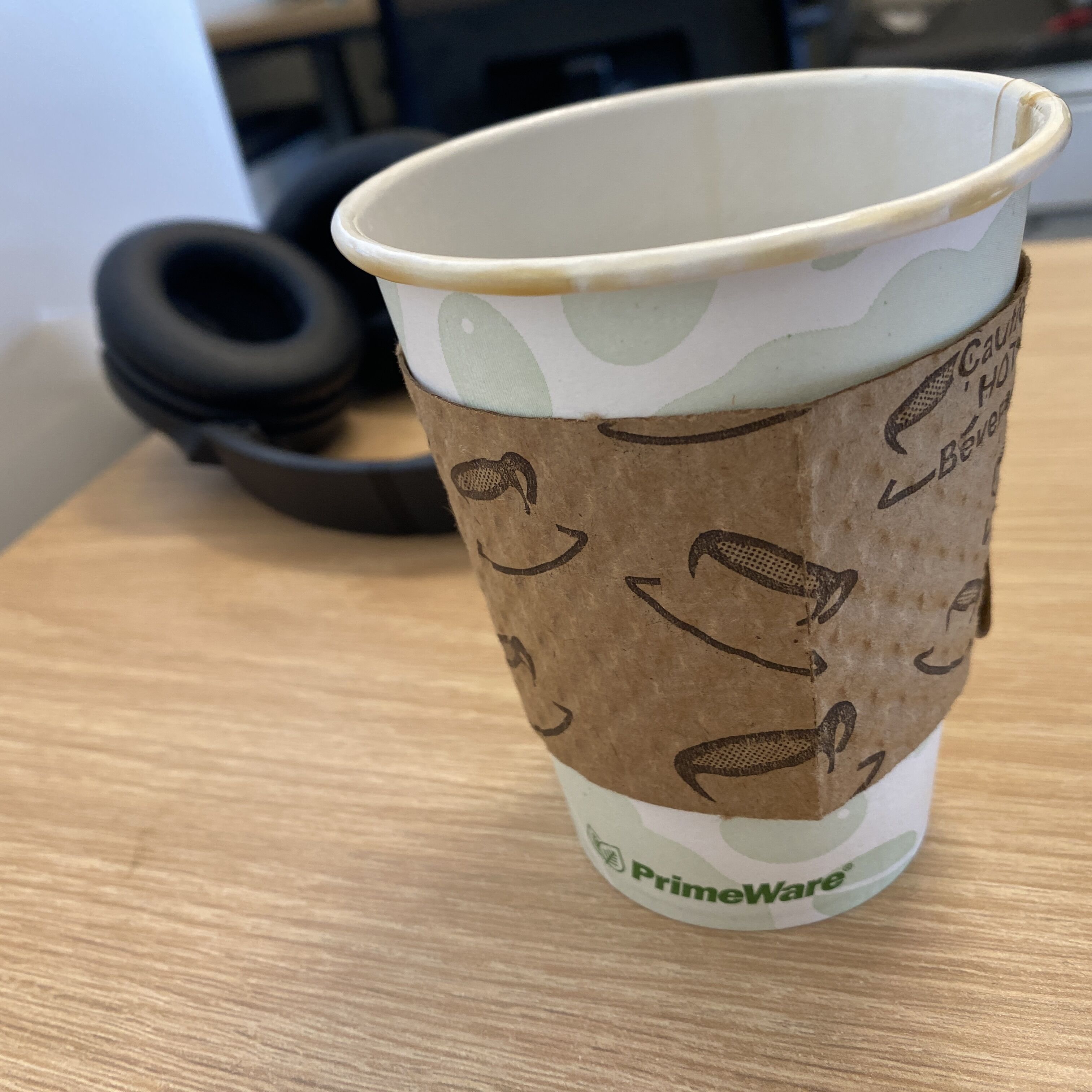

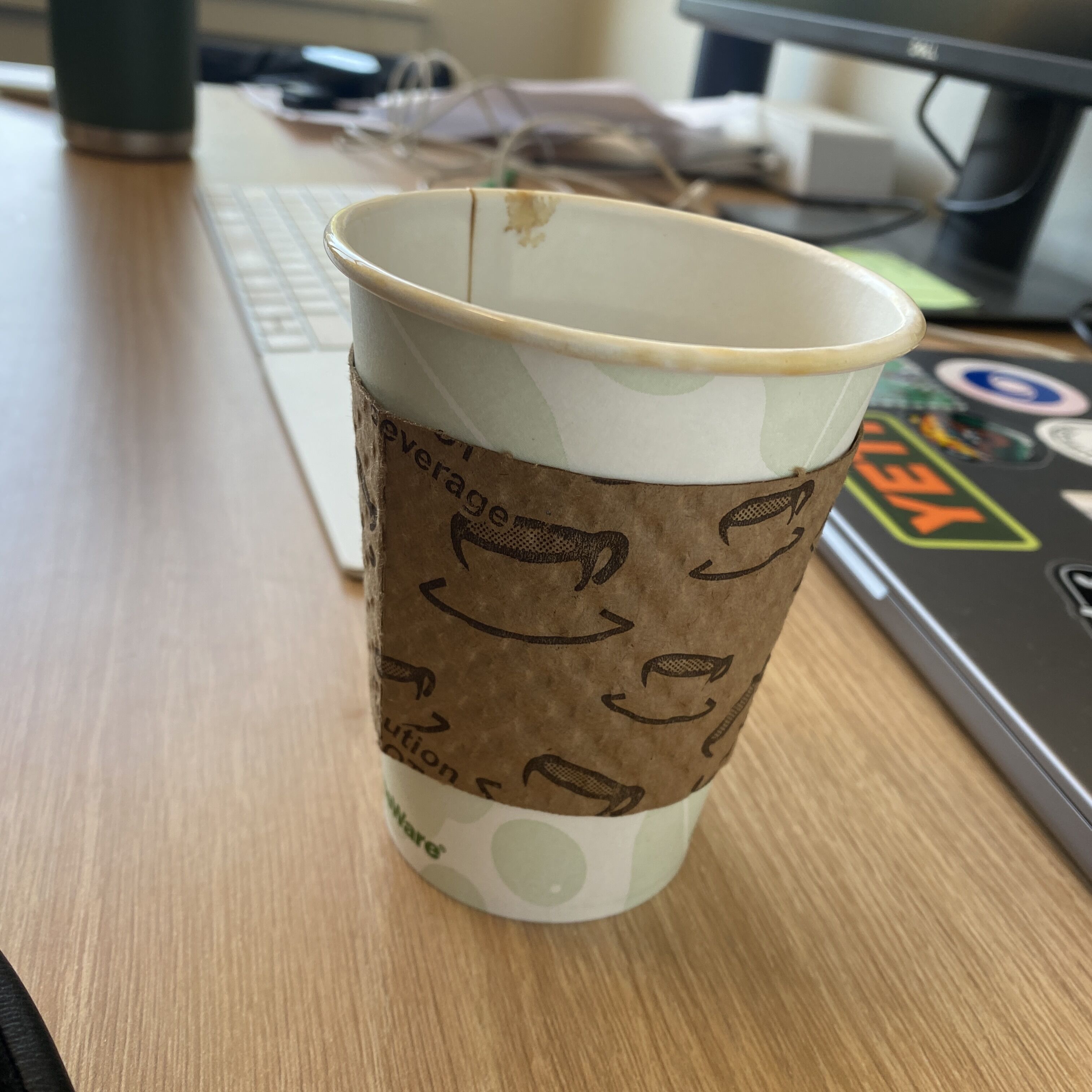

Example of images associated with the object `cup`

Robot Demonstrations

Robot cleaning a child's playroom

Robot cleaning a kitchen

Citation

@article{kwon2023toward,

title={Toward Grounded Commonsense Reasoning},

author={Kwon, Minae and Hu, Hengyuan and Myers, Vivek and Karamcheti, Siddharth and Dragan, Anca and Sadigh, Dorsa},

journal={arXiv preprint arXiv:2306.08651},

year={2023}

}